Cursor's "In-House" Model Was Someone Else's. It Took 24 Hours to Find Out.

Yesterday we covered Cursor's Composer 2 launch. A 50-person team, their own in-house model, beating Claude Opus at coding for a fraction of the price. It was a great story. Turns out it was a little too good.

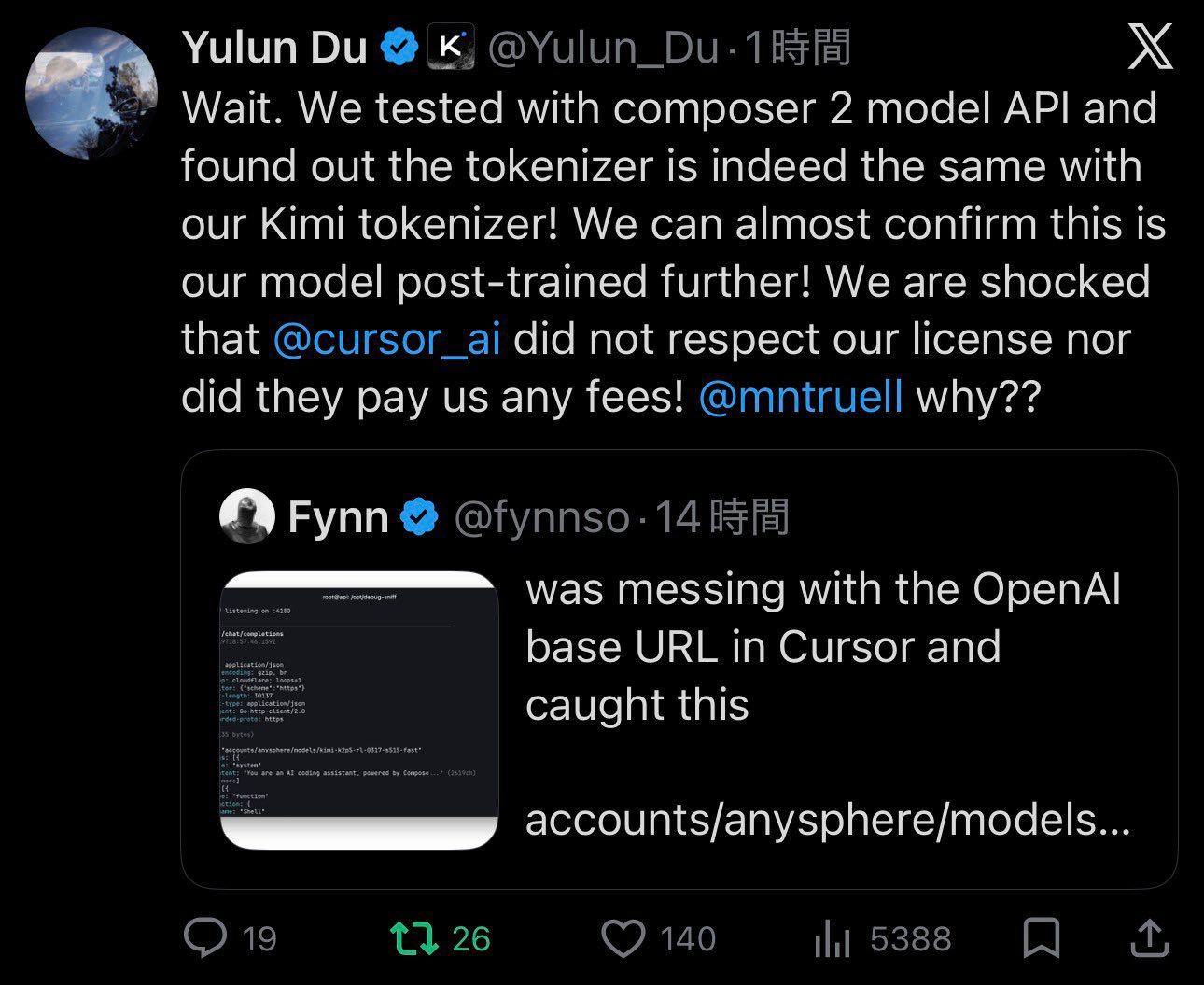

Within 24 hours of launch, a developer started poking around the API and found the model ID sitting in plain text: kimi-k2p5-rl-0317-s515-fast. For anyone not fluent in model naming conventions, that's Kimi K2.5, a model built by Moonshot AI, a Chinese lab that spent hundreds of millions training it. Moonshot had open-sourced it with one condition: if you're making over $20 million a month from it, you display the Kimi K2.5 name. Cursor, currently valued at $50 billion, did not do that.

Moonshot's head of pretraining ran a tokenizer test, confirmed it was identical to theirs, and publicly tagged Cursor's co-founder with a simple question: why aren't you respecting our license? Then it got messier. It turned out Kimi K2.5 had been sitting inside Cursor's own model picker just weeks earlier, available for free. Then one day in February it quietly vanished from the list, and reappeared shortly after as Composer 2, their proprietary in-house model. Elon Musk joined the thread with four words: "Yeah, it's Kimi 2.5."
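For anyone wondering what a "tokenizer test" even looks like, here is a rough sketch of the idea: feed the same probe strings to two tokenizers and check whether the token IDs match exactly. To be clear, this is not the test Moonshot ran (nobody has published the details), and the vocabularies, probe strings, and function names below are toy values made up for illustration.

```python
from typing import Dict, List

def encode(text: str, vocab: Dict[str, int]) -> List[int]:
    """Greedy longest-match tokenizer over a toy vocabulary (illustrative only)."""
    ids: List[int] = []
    max_len = max(len(tok) for tok in vocab)
    i = 0
    while i < len(text):
        # Try the longest candidate piece first, then shrink.
        for size in range(min(max_len, len(text) - i), 0, -1):
            piece = text[i:i + size]
            if piece in vocab:
                ids.append(vocab[piece])
                i += size
                break
        else:
            raise ValueError(f"no token covers {text[i]!r}")
    return ids

def same_tokenizer(vocab_a: Dict[str, int], vocab_b: Dict[str, int],
                   probes: List[str]) -> bool:
    """True if both vocabularies produce identical token IDs for every probe."""
    return all(encode(p, vocab_a) == encode(p, vocab_b) for p in probes)

# Hypothetical toy vocabularies: the "suspect" is a copy of the base,
# the "other" splits text differently.
VOCAB_BASE = {"de": 0, "f": 1, "def": 2, " ": 3, "x": 4, "(": 5, ")": 6, ":": 7}
VOCAB_SUSPECT = dict(VOCAB_BASE)
VOCAB_OTHER = {"d": 0, "e": 1, "f": 2, " ": 3, "x": 4, "(": 5, ")": 6, ":": 7}
PROBES = ["def x():", "x def", "(de f)"]

if __name__ == "__main__":
    print(same_tokenizer(VOCAB_BASE, VOCAB_SUSPECT, PROBES))
    print(same_tokenizer(VOCAB_BASE, VOCAB_OTHER, PROBES))
```

Matching IDs across a handful of strings is only suggestive. The signal gets stronger as the probe set gets larger and stranger, because two independently trained tokenizers almost never split unusual text the same way.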

The posts from Moonshot employees were deleted within hours. Legal got involved.

Then came the apology. Cursor's co-founder posted that it was "a miss to not mention the Kimi base in our blog from the start" and that they would fix that for the next model. He added that Moonshot had clarified their usage was licensed, and that he's "a big believer in open source."

The internet was not particularly moved. The top reply translated it plainly: "we got caught and next time we'll remember to mention it." The phrase making the rounds was performative transparency, the idea that admitting something only after you've been publicly exposed is not really transparency at all.

To be fair, Cursor's position is that about 75% of the compute on the final model came from their own training, and that the license question has now been resolved on Moonshot's end. Whether the model genuinely deserved to be called in-house is a technical debate. Whether omitting the source while raising at a $50 billion valuation was a mistake or a strategy is a different question entirely.

Moonshot is valued at $4.3 billion. Cursor is valued at $50 billion. One of them trained the model. The other one raised money on it. And then apologised when they got caught.

Bernie Sanders Found the One AI That Agrees With Him.

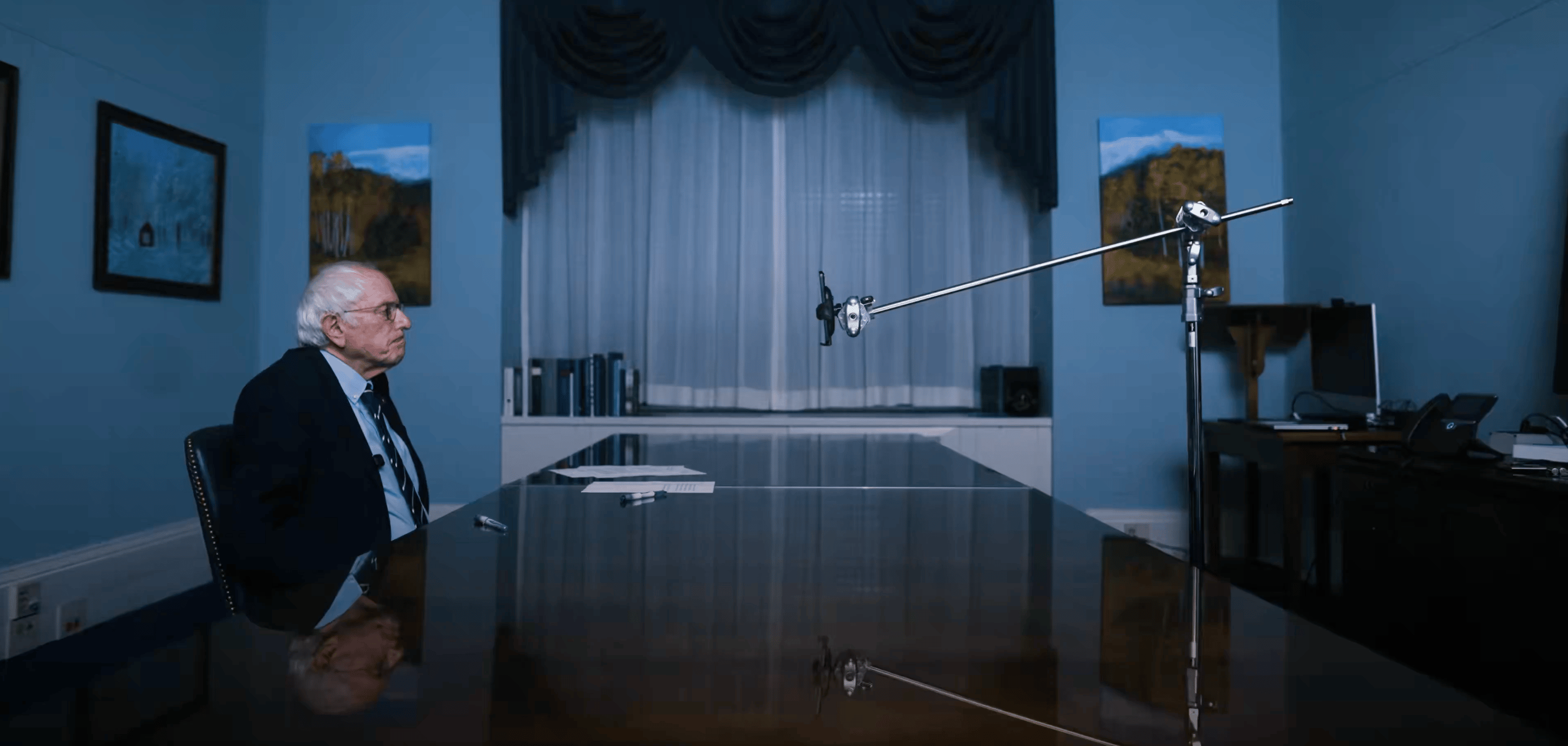

Senator Bernie Sanders posted a 3-minute video this week that has now racked up 6.3 million views. In it, he sits across from Claude and asks it about AI companies collecting personal data, how that data is used, and whether ordinary Americans have any real say in the matter.

Picture it. An 84-year-old democratic socialist senator, alone in a dark room, interrogating a chatbot. This is where we are now.

Claude's answers were actually pretty substantive. It acknowledged that most people click agree on terms of service without reading them and have no idea how much data is being combined about them behind the scenes. Browsing history, location data, purchasing behaviour, how long someone pauses on a webpage. Real stuff worth knowing.

The part the internet latched onto was that Claude agreed with pretty much every framing Bernie put to it. Bernie would make a point, Claude would affirm it. Bernie would push a bit further, Claude would affirm that too. Bernie could have told Claude the moon was made of cheese and Claude probably would have said "that raises some important concerns about dairy regulation."

Which is either a reasonable AI being responsive to a line of questioning, or a chatbot telling a senator exactly what he wants to hear. Probably a bit of both.

The broader reaction was split. Some people appreciated that a sitting senator was actually engaging with AI and raising data privacy questions in public. Others pointed out that interviewing an AI to confirm your priors is not quite the same as a rigorous investigation.

But here is the thing. Most politicians are still treating AI as a talking point. Bernie actually used it, put it on camera, and started a conversation that drew more than 6 million views. That is more than most of his colleagues have managed.

You might not agree with his politics. But at least he showed up.

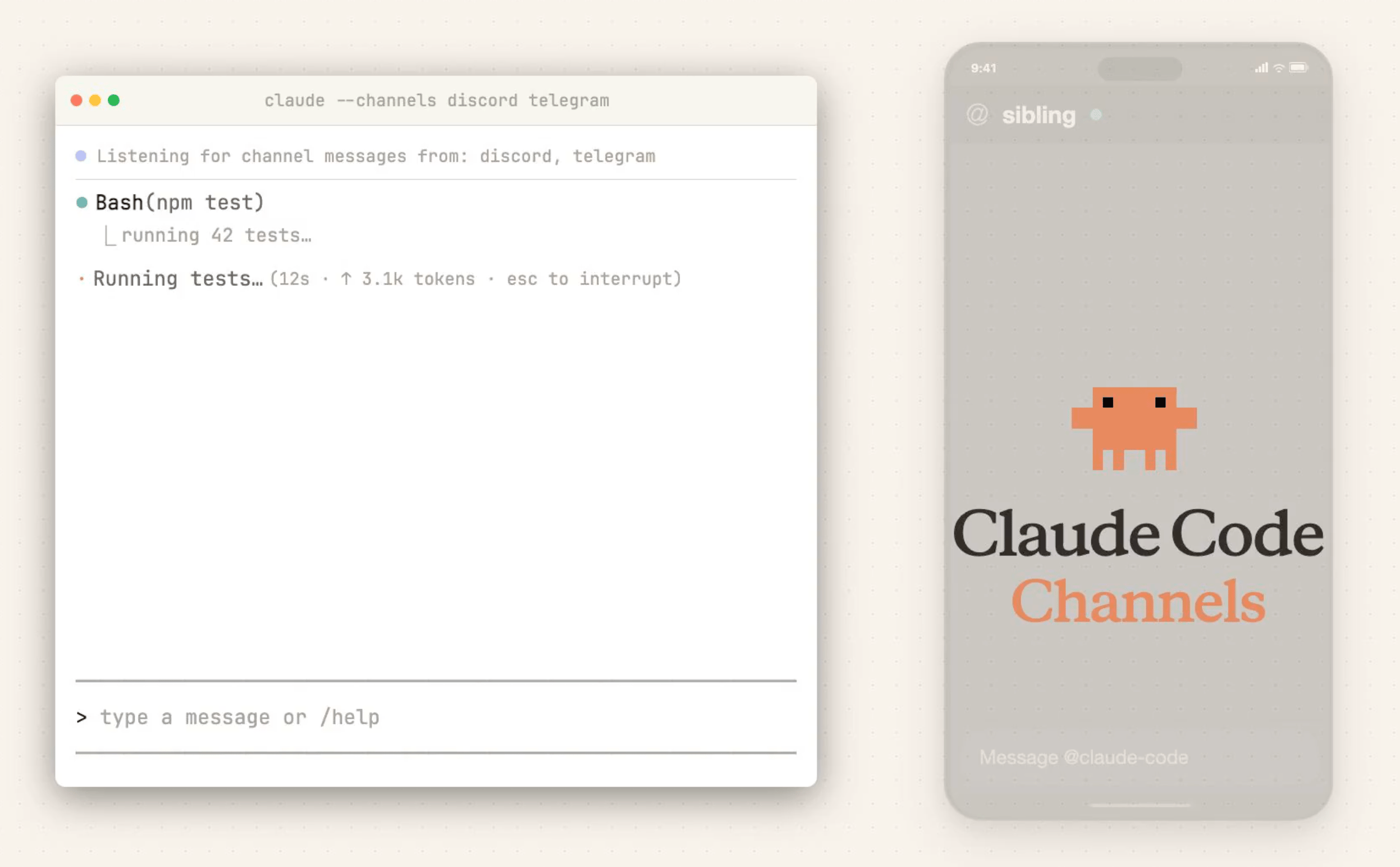

You can now text Claude Code from your phone.

Anthropic just shipped Claude Code Channels, and it's exactly what it sounds like. You connect your Claude Code session to Telegram or Discord, and then you can message it directly from your phone.

Your code is running on your laptop. You're on your couch. You send a message asking if the build is green yet. Claude Code replies. That's it. That's the feature. And it's genuinely useful.

If you already use Claude Code this is worth five minutes of setup right now. Just run claude with the channels flag and connect your Telegram or Discord account.