Gemini 3.2 Flash just appeared in the Gemini app.

Gemini 3.2 Flash briefly showed up in the Gemini iOS and Android apps for some users today, listed under the model selector as "All-around help." It disappeared quickly, but not before people got screenshots and started running tests. The brief appearance lines up with a broader pattern of signals that Google is preparing a significant pre-I/O drop.

Early impressions are promising. Testers found it performing surprisingly close to Gemini 3.1 Pro in reasoning while keeping the speed and low cost that Flash models are known for. It also showed strong results in 3D coding, SVG generation, and physics simulations. The knowledge cutoff appears to be January 2026, which, if confirmed, would make it one of the more up-to-date models available. Leaked pricing puts it at $0.25 per million input tokens and $2.00 per million output tokens, which would make it highly competitive on cost against both OpenAI's and Anthropic's comparable tiers.
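To put those leaked rates in concrete terms, here is a minimal sketch of the per-request arithmetic. The prices are the unconfirmed figures quoted above, and the workload (10,000 input tokens and 1,000 output tokens per request) is a made-up example, not anything from the leak.

```python
# Leaked, unconfirmed prices from the report (USD per million tokens).
INPUT_PRICE_PER_M = 0.25
OUTPUT_PRICE_PER_M = 2.00


def request_cost(input_tokens: int, output_tokens: int) -> float:
    """Estimate the USD cost of one request at the leaked rates."""
    total = input_tokens * INPUT_PRICE_PER_M + output_tokens * OUTPUT_PRICE_PER_M
    return total / 1_000_000


# Hypothetical workload: 10,000 input tokens and 1,000 output tokens per request.
per_request = request_cost(10_000, 1_000)
print(f"${per_request:.4f} per request")
print(f"${per_request * 1_000:.2f} per thousand requests")
```

Because output tokens are priced at eight times the input rate, the real bill would depend heavily on how long the model's answers are.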

Gemini 2 Flash is already showing deprecation notices on Google's developer platform, signaling that the transition to the 3.x generation is actively underway. Updated Gemini 3 Flash models have also been spotted in testing on LM Arena, the independent benchmark where models compete head-to-head on real user preferences. Google I/O runs May 19 to 20, and interface logs point to a rollout right around or just before the event.

The working theory is that 3.2 Flash is the efficient everyday model Google ships ahead of the conference, while Gemini 3.5, which has been rumored as the headline announcement, gets saved for the main stage. If that is the structure, what appeared briefly in the app today is just the opening act.

A startup just claimed to build the fastest and cheapest frontier model ever.

A Miami-based startup called Subquadratic came out of nowhere today with a $29 million seed round and a model called SubQ that, if the claims hold up, would represent one of the most significant architectural breakthroughs in AI in years.

The pitch is this: standard AI models compare every token in a conversation with every other token, so the work grows roughly with the square of the context length and gets extremely expensive and slow as context gets longer. SubQ claims to have built a new architecture that identifies only the relationships that actually matter and ignores the rest, using dramatically less compute as a result. The numbers the company is putting forward are hard to believe on first read: 52 times faster than the current industry standard at one million tokens, a 12 million token context window (the largest ever claimed), under 5% of the cost of Claude Opus, and availability via API today for under $1.50 per million tokens.
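For a rough sense of why the all-pairs approach gets expensive, the sketch below counts the token pairs that would need scoring. It is not SubQ's method, which has not been published. The `sparse_pairs` function and the budget `KEPT_PER_TOKEN` are hypothetical stand-ins for "only the relationships that matter," and the ratios it prints are illustrative, not a reproduction of the 52x claim.

```python
def dense_pairs(n_tokens: int) -> int:
    """Pairs scored when every token is compared with every token."""
    return n_tokens * n_tokens


def sparse_pairs(n_tokens: int, kept_per_token: int) -> int:
    """Pairs scored if each token keeps at most `kept_per_token` relationships."""
    return n_tokens * min(kept_per_token, n_tokens)


# Hypothetical budget; the real figure (if one exists) is not public.
KEPT_PER_TOKEN = 4096

for n in (10_000, 1_000_000, 12_000_000):
    ratio = dense_pairs(n) / sparse_pairs(n, KEPT_PER_TOKEN)
    print(f"{n:>12,} tokens: all-pairs scores {ratio:,.0f}x more pairs")
```

The point is only the shape of the curve: the all-pairs count grows with the square of the context length, while a fixed per-token budget grows linearly, so the gap widens as the context gets longer.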

The reaction online has been split directly down the middle. One side is calling it the biggest architectural leap since the Transformer, the foundational technology that powers every major AI model today. The other side is calling it AI Theranos, a reference to the infamous startup that made extraordinary medical claims that turned out to be false. There is no published research paper yet, which is the main reason skeptics are holding back.

The co-founder is an ex-Meta engineer who says this was built from the ground up by a team of top researchers. Early access is live at subq.ai. If anyone can verify the claims independently, this week just got a lot more interesting.

Arnold Schwarzenegger has a newsletter.

Yeah. That Arnold Schwarzenegger.

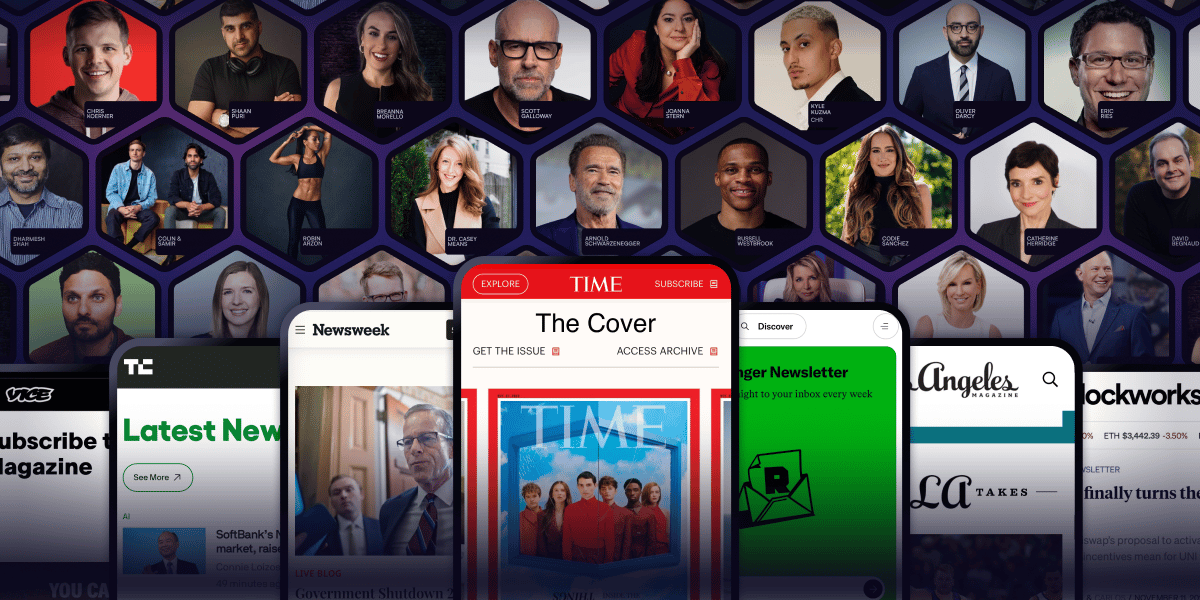

So do Codie Sanchez, Scott Galloway, Colin & Samir, Shaan Puri, and Jay Shetty. And none of them are doing it for fun. They're doing it because a list you own compounds in ways that social media never will.

beehiiv is where they built it. You can start yours for 30% off your first 3 months with code PLATFORM30. Start building today.

OpenAI is apparently building a phone.

Reports are circulating that OpenAI is accelerating development of its first AI agent phone, with mass production potentially starting in early 2027. The device is expected to run on a customized MediaTek chip built on TSMC's most advanced manufacturing process, with the camera system being the headline feature, designed specifically to improve how AI agents perceive and interact with the real world.

The timing makes sense strategically. OpenAI is heading toward an IPO, and positioning itself as a consumer hardware platform as well as a model company changes that story considerably. If shipment projections hold, around 30 million units could ship across 2027 and 2028.

Nothing is confirmed, but the race for AI-native hardware is clearly heating up, and OpenAI does not appear to want to sit it out.