Google just told designers "everyone can do your job now." Designers had thoughts.

Today Google dropped a major update to Stitch, its AI design tool. Five big upgrades: an AI-native canvas, a smarter design agent, voice input, instant prototypes, and built-in design system support. You describe what you want, Stitch builds it. You talk to it, it iterates. Rolling out now.

The reaction was immediate and split pretty cleanly down the middle.

One camp loved it. Founders and engineers who have always had ideas but couldn't execute them visually now have a real tool. The blank-canvas problem, that paralysis of not knowing where to start, basically disappears. For that group, Stitch is a genuine unlock.

The other camp pushed back, and the best version of that argument came from @aiwith_sarah on X: "Everyone is a designer now the same way everyone is a writer with ChatGPT. Technically true. Practically misleading. The tool removes the execution barrier. It doesn't remove the need to know what good looks like. Access democratized. Judgment didn't."

That's the real tension. Stitch doesn't give you taste. It gives you speed. And if everyone can now produce designs instantly, the thing that separates good design from noise isn't the tool anymore. It's the eye behind it.

Vibe coding already changed who could ship software. Vibe design is next. The question is whether the output gets better or whether there's just more of it.

A Chinese AI just trained itself. Nobody touched it.

MiniMax, a Chinese AI lab, just dropped M2.7, and the headline isn't the benchmarks. It's how the model was built.

MiniMax let M2.7 participate in its own training. The model built its own testing tools, ran its own experiments, analyzed the results, criticized its own performance, and used that feedback to improve itself. Researchers set the objective. The model figured out the rest. Over 100 rounds of autonomous self-improvement, no humans in the loop.

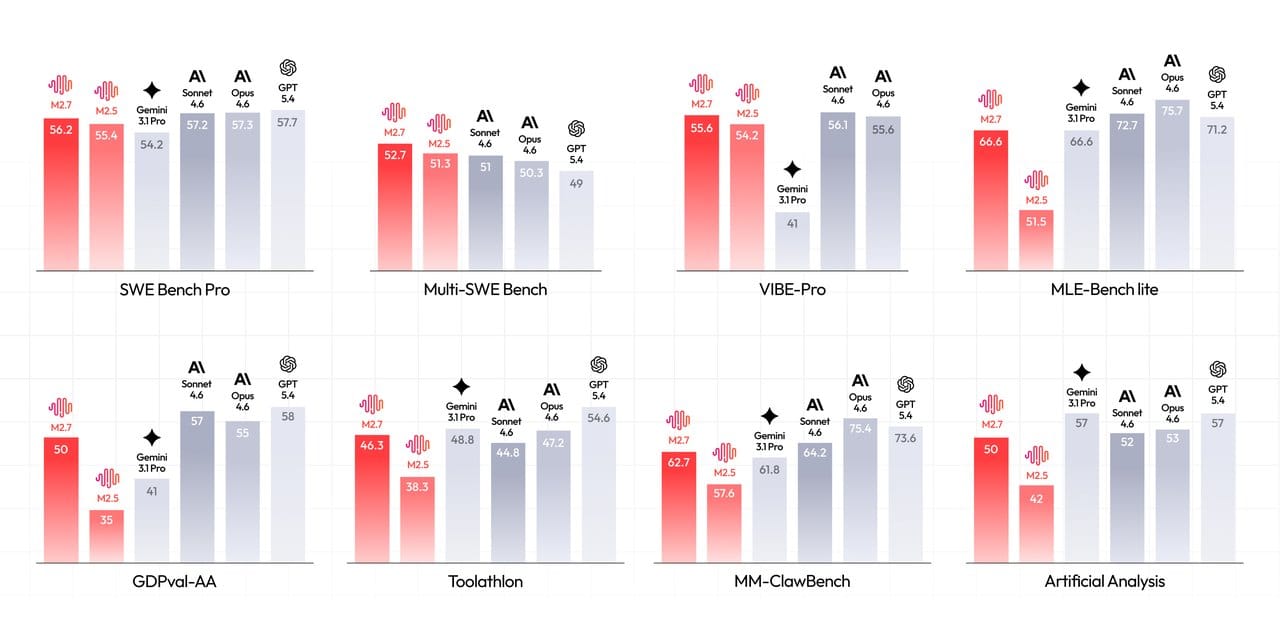

The results are hard to ignore. M2.7 now performs on par with Claude Opus 4.6 and GPT-5.4 on coding and reasoning tasks, beating Gemini 3.1 along the way. The model that just matched the best in the world did it by training itself. And it runs on a single consumer GPU, not a server farm.

Internally, M2.7 already handles a significant chunk of MiniMax's own AI research autonomously: monitoring experiments, analyzing results, and fixing its own code. That's work that used to require the lab's human researchers.

The bigger implication is what comes next. If models can meaningfully improve themselves without human input, the whole playbook for how AI gets built starts to look very different. We might be watching the early version of something that compounds in ways nobody has fully mapped out yet.

China used to lag on frontier AI. M2.7 just tied for second place. And it got there by teaching itself.

Perplexity Comet is finally on iPhone.

After a one-week delay from its original March 11 launch date, Perplexity's AI browser Comet is now live on the App Store.

The pitch is simple: your browser's address bar is now an AI. Browse any page, ask questions about it, get summaries, book things, and schedule things, all without switching apps or opening a new tab. You can also choose which AI model powers it, with options from OpenAI, Anthropic, Meta, and others.

It's free to download, with Pro and Max subscriptions available if you want the deeper features.

Safari has had a good run.