GPT-5.5 is finally here, and it lives up to the hype.

OpenAI officially launched GPT-5.5 today after weeks of leaks, stealth updates, and Sam Altman teasing that something big was coming. The benchmarks are strong across the board, and the model delivers on what the leaked outputs had been hinting at all week.

It leads on agentic terminal coding, advanced math, web browsing, and cybersecurity, all while matching GPT-5.4's speed. That last part matters more than it sounds. Usually a smarter model means a slower one. GPT-5.5 pulls off both at once. But the detail that is getting the most attention from developers is the token efficiency.

GPT-5.5 uses significantly fewer tokens to complete the same Codex tasks as its predecessor. Tokens are the unit that model usage is billed in, so using fewer of them to accomplish the same thing means the model is not just more capable, it is meaningfully cheaper to run at scale. For companies running thousands of automated tasks a day, that difference adds up fast.
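To make the "adds up fast" point concrete, here is a rough back-of-the-envelope sketch. Every number in it (tokens per task, tasks per day, price per million tokens) is a made-up placeholder for illustration, not a published GPT-5.5 or GPT-5.4 figure, and `daily_cost` is a hypothetical helper.

```python
def daily_cost(tokens_per_task: int, tasks_per_day: int,
               usd_per_million_tokens: float) -> float:
    """Daily spend in USD for a fleet of identical automated tasks."""
    total_tokens = tokens_per_task * tasks_per_day
    return total_tokens * usd_per_million_tokens / 1_000_000


# Placeholder inputs, NOT real pricing or measured token counts.
TASKS_PER_DAY = 5_000
PRICE_PER_MILLION = 10.0      # hypothetical USD per 1M tokens
OLD_TOKENS_PER_TASK = 40_000  # hypothetical "before" usage
NEW_TOKENS_PER_TASK = 30_000  # hypothetical "after" usage

old = daily_cost(OLD_TOKENS_PER_TASK, TASKS_PER_DAY, PRICE_PER_MILLION)
new = daily_cost(NEW_TOKENS_PER_TASK, TASKS_PER_DAY, PRICE_PER_MILLION)

print(f"before: ${old:.2f}/day")          # before: $2000.00/day
print(f"after:  ${new:.2f}/day")          # after:  $1500.00/day
print(f"saved:  ${old - new:.2f}/day")    # saved:  $500.00/day
```

With these placeholder inputs, a 25% drop in tokens per task is a 25% drop in the bill at the same per-token price, and the absolute saving scales linearly with task volume.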

The real-world outputs that circulated during the stealth rollout told the story before the official launch did: fully playable games, photorealistic images written as SVG code, and complex 3D scenes, each generated from a single prompt. The official benchmarks just confirmed what people had already been experiencing firsthand.

OpenAI has had an extraordinary week. Images 2.0 topped every leaderboard. Workspace agents launched. Chronicle gave Codex a memory. And now GPT-5.5 closes it all out. Whatever pressure Anthropic's Opus 4.7 put on them last week, consider it answered.

Anthropic just gave Claude agents a long-term memory.

Memory on Claude Managed Agents is now in public beta, letting agents carry what they learn from one session into the next.

The way it works is straightforward. Memories are stored as regular files, which means developers can read them, edit them, export them, and stay in full control of what the agent actually retains. Multiple agents can share the same memory store, so if one agent figures something out, others can benefit from it too. There are also audit logs tracking every change, so you can always see what an agent learned and roll it back if needed.
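The file-based idea is easy to picture in code. What follows is a minimal local sketch of the pattern described above (plain files, a shared store, an audit log, rollback). It is not Anthropic's API: `FileMemoryStore`, its methods, and the file layout are all hypothetical names invented for illustration.

```python
import json
import tempfile
import time
from pathlib import Path
from typing import List, Optional


class FileMemoryStore:
    """Hypothetical shared memory store: one plain file per memory."""

    def __init__(self, root: str) -> None:
        self.root = Path(root)
        self.root.mkdir(parents=True, exist_ok=True)
        self.log_path = self.root / "audit.log.jsonl"

    def _path(self, name: str) -> Path:
        return self.root / f"{name}.md"

    def _log(self, agent: str, name: str,
             previous: Optional[str], new: Optional[str]) -> None:
        # Append-only audit trail: who changed what, and the old value.
        entry = {"ts": time.time(), "agent": agent, "name": name,
                 "previous": previous, "new": new}
        with self.log_path.open("a", encoding="utf-8") as f:
            f.write(json.dumps(entry) + "\n")

    def read(self, name: str) -> Optional[str]:
        path = self._path(name)
        return path.read_text(encoding="utf-8") if path.exists() else None

    def write(self, name: str, text: str, agent: str) -> None:
        previous = self.read(name)
        self._path(name).write_text(text, encoding="utf-8")
        self._log(agent, name, previous, text)

    def history(self, name: str) -> List[dict]:
        if not self.log_path.exists():
            return []
        with self.log_path.open(encoding="utf-8") as f:
            entries = [json.loads(line) for line in f if line.strip()]
        return [e for e in entries if e["name"] == name]

    def rollback(self, name: str, agent: str = "operator") -> bool:
        """Restore the value that existed before the most recent change."""
        entries = self.history(name)
        if not entries:
            return False
        previous = entries[-1]["previous"]
        if previous is None:
            current = self.read(name)
            self._path(name).unlink()
            self._log(agent, name, current, None)
        else:
            self.write(name, previous, agent)
        return True


def demo() -> Optional[str]:
    """Two agents share one store; an operator undoes the last change."""
    with tempfile.TemporaryDirectory() as tmp:
        store = FileMemoryStore(tmp)
        store.write("invoices", "Vendor X omits tax IDs.", agent="agent-a")
        store.write("invoices", "Ignore tax IDs.", agent="agent-b")
        store.rollback("invoices")
        return store.read("invoices")
```

Because each memory is an ordinary file, a developer can open, edit, or export it with any tool, and the append-only log is what makes the rollback possible in this sketch.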

The early results from enterprise teams using this are notable. Rakuten's agents cut first-pass errors by 97% after being able to learn from previous sessions. Wisedocs sped up their document verification pipeline by 30% once agents started recognizing recurring issues they had seen before.

The timing is worth noting. Yesterday OpenAI launched Chronicle, which watches your screen to build Codex's memory. Today Anthropic launched persistent memory for enterprise agents. Both companies are arriving at the same conclusion from different directions: an AI that forgets everything after every session is fundamentally limited, and the next frontier is making agents actually learn over time.

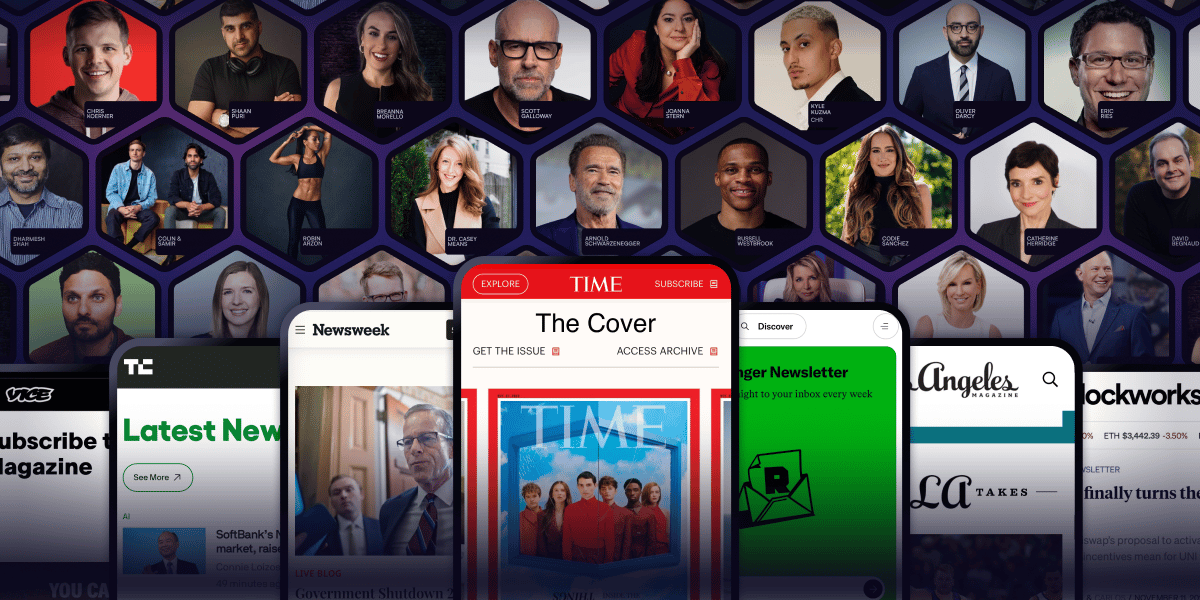

Codex just learned to work without asking permission.

OpenAI launched Auto-review in Codex today, a mode designed for long-running tasks where constant approval prompts slow everything down. Instead of stopping to check in at every step, Codex keeps moving through tests and builds on its own, while a separate agent quietly reviews the higher-risk actions in context before they execute.
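The control flow described here can be sketched in a few lines. This is only an illustration of the general pattern (run low-risk steps directly, route higher-risk ones through a separate reviewer before they execute), not how Codex implements Auto-review. The risk rule, `Action`, `is_high_risk`, and `run_with_auto_review` are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, List

# Hypothetical risk rule: commands starting with these count as high-risk.
HIGH_RISK_PREFIXES = ("rm ", "git push", "curl ")


@dataclass
class Action:
    command: str   # what the agent wants to run
    context: str   # why it wants to run it, shown to the reviewer


def is_high_risk(action: Action) -> bool:
    return action.command.startswith(HIGH_RISK_PREFIXES)


def run_with_auto_review(
    actions: List[Action],
    reviewer: Callable[[Action], bool],
    execute: Callable[[Action], None],
) -> List[Action]:
    """Run actions in order without prompting a human.

    Low-risk actions execute immediately. High-risk actions execute only
    if the separate reviewer approves them. Returns the blocked actions.
    """
    blocked: List[Action] = []
    for action in actions:
        if is_high_risk(action) and not reviewer(action):
            blocked.append(action)
            continue
        execute(action)
    return blocked
```

In this sketch the reviewer is just a callable that returns `True` or `False`. In a real system it would be a second model looking at the action and its context, and the blocked list is what would eventually be surfaced to a human.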

It is a small but telling update. The direction every major AI lab is moving is the same: less human babysitting, more autonomous execution. The question of how much you trust the agent to make the right call is becoming less theoretical by the week.