OpenAI's former CTO just showed the world what she has been building.

Mira Murati left OpenAI in 2024 after serving as its CTO, founded Thinking Machines Lab shortly after, raised a record amount for a new AI lab almost immediately, and has been quiet ever since. Today, that silence ended.

What Thinking Machines released is not a chatbot. It is what they are calling an interaction model, and the distinction matters. Every major AI assistant today works in turns. You speak or type, it waits, then responds. Thinking Machines built something that works the way people actually communicate with each other: continuously. The model perceives audio, video, and text at the same time, processes them in 200-millisecond chunks, and responds in real time without waiting for you to finish. It can interject when you say something wrong, translate live as you speak, count your pushups by watching you, and search the web while holding a conversation, all without the person on the other end noticing it is doing several things at once.

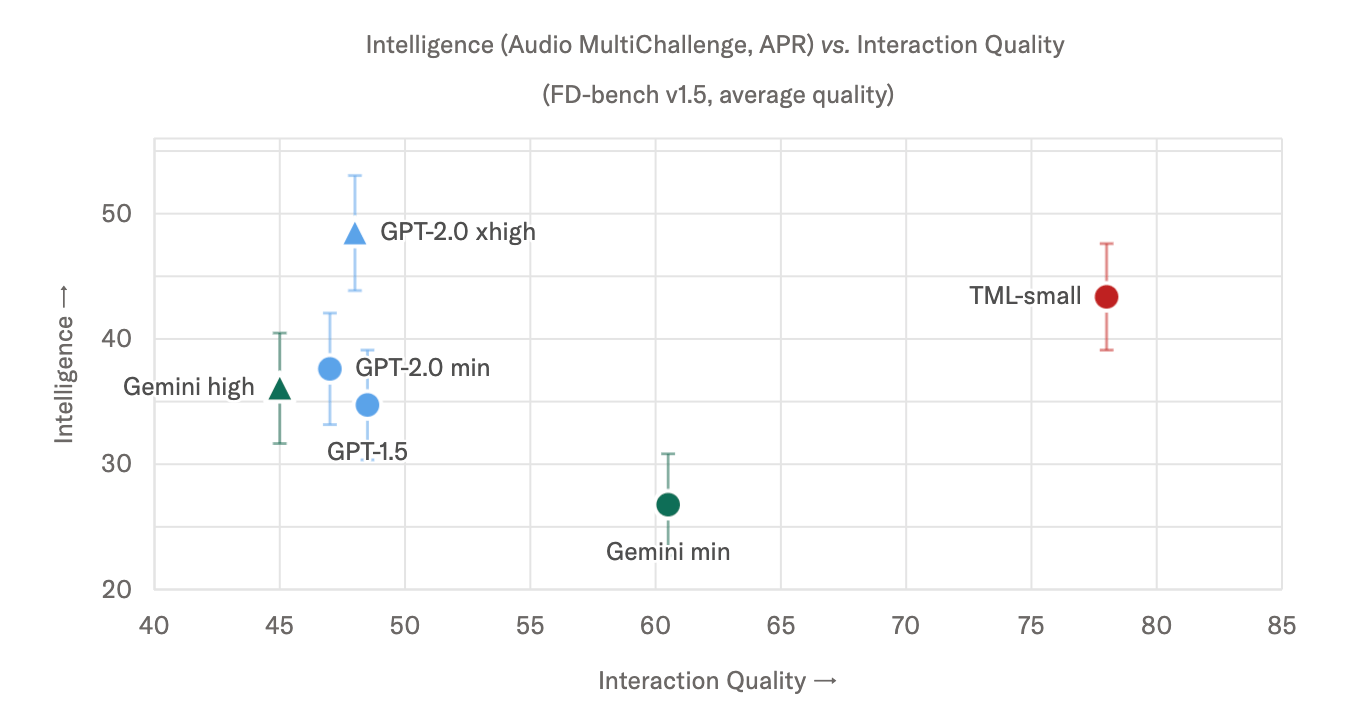

The benchmarks back up the demo. On interaction quality tests, TML-Interaction-Small leads every model measured, including GPT Realtime-2.0 and Gemini. On intelligence benchmarks it sits comfortably among the top non-thinking models. The model has 276 billion parameters, of which 12 billion are active at any time, and Thinking Machines says larger versions are coming later this year.

This is a research preview, not a consumer product yet. But for a lab that has said almost nothing since it was founded, this is a significant first statement about what it believes AI collaboration should actually feel like.

Google's video AI just leaked ahead of I/O.

Gemini Omni, Google's upcoming video generation model, has started appearing in early app tests ahead of Google I/O next week. Testers can remix videos, edit clips through conversation, and use templates, all from inside Gemini without switching tools. Early demos show smooth camera movements, consistent scenes across cuts, and voice quality that reportedly edges out Veo 3.1 in certain areas.

The honest early verdict is that the visual side is genuinely impressive, especially the consistency across scenes, which has historically been one of the hardest problems in AI video. The audio is another story. Early testers noted it still has that unmistakable Google voice quality, the kind you can identify immediately without being told what made it. Clips are also capped at 10 seconds and there are occasional motion glitches.

For a model that has not been officially announced yet, the fact that it is coherent enough to be demoed at all is a good sign for what Google has planned for I/O. You can watch one of the early generated clips here.

OpenAI accidentally confirmed something is coming to Codex.

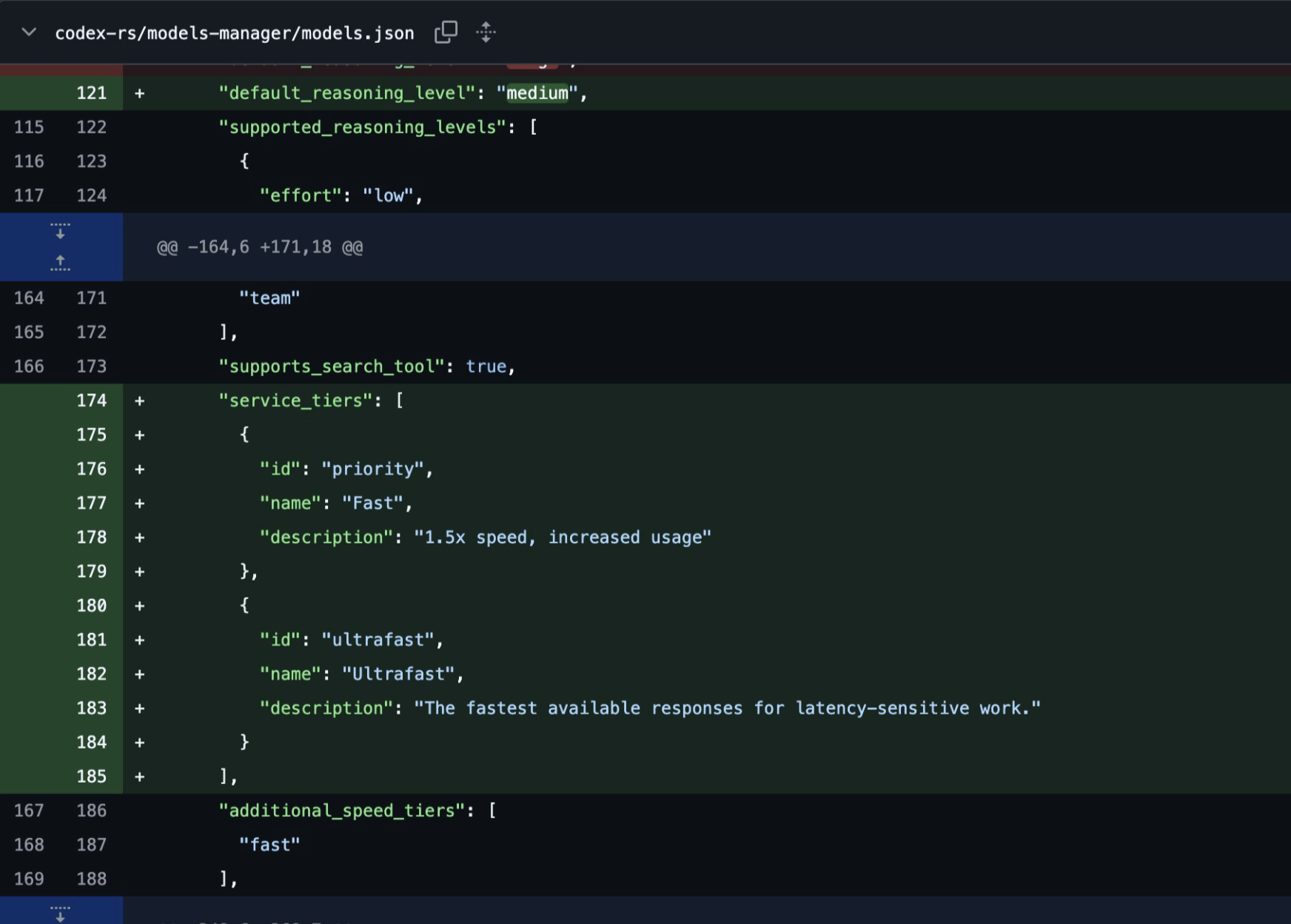

A reference to an "Ultrafast Mode" briefly appeared in OpenAI's Codex GitHub repository this week, described as offering the fastest available responses for latency-sensitive work. Then it disappeared. It was scrubbed almost as quickly as it had appeared, which in AI leak culture is basically a confirmation. If it was nothing, you leave it. If it matters, you scrub it.

The implication is that OpenAI is working on a new speed tier for Codex aimed at tasks where response time takes priority over depth of reasoning. Think real-time coding assistance, instant autocomplete, or workflows where waiting even a few seconds breaks the experience. It fits the broader direction Codex has been moving in, from a powerful but occasionally slow agent to something that wants to feel instantaneous.

No timeline, no official word, just a deleted description and a lot of people who saw it before it was gone. That tends to be how the most interesting OpenAI announcements start.