Google took vibe coding to the next level.

If you haven't heard the term: vibe coding is when you describe what you want to build in plain English and AI writes the code for you. No syntax, no Stack Overflow, no engineering degree. Just describe the idea and watch it appear.

Up until now, vibe coding has had a ceiling. You could describe something, get a decent frontend, maybe some basic logic. But the moment you needed a real database, authentication, live data, or payments, you were back to doing it yourself.

Google just removed that ceiling.

They announced a full-stack vibe coding experience inside Google AI Studio, powered by the Antigravity coding agent and Firebase backends. You describe what you want to build, and the agent builds it, including the backend. Real databases, real authentication flows, real connections to live services and payment processors. Not a prototype. An actual working app.

What's most notable is that the agent keeps working even when you're not there. It maintains context about your project, remembers where you left off, and completes tasks in the background. You come back to finished work.

To show what's possible, Google demoed Geoseeker, a full-stack multiplayer app with real-time state management, compass logic, and a live Google Maps integration. Built entirely inside AI Studio through natural language.

This is Google's direct answer to Cursor and Claude Code. And it's arguably the most complete vibe coding environment anyone has shipped. Work that used to require a full engineering team now starts in a text box.

A year ago you needed five engineers to ship something like this. Now you need a description and a weekend.

Anthropic interviewed 81,000 people about AI. What they found is worth sitting with.

Last December, Anthropic ran the largest qualitative AI study ever conducted. They used an AI interviewer to have open-ended conversations with over 80,000 Claude users across 159 countries and 70 languages. Not a multiple-choice survey. Actual conversations about hopes, fears, and real experiences with AI.

The results are hard to summarize cleanly because they don't fit a clean narrative. AI is helping people in ways that are genuinely moving, and scaring them in ways that are equally real, often in the same person at the same time.

On the hope side: 81% said AI had already taken a meaningful step toward their stated vision. The quotes behind that number are striking. A healthcare worker in the US who got properly diagnosed after nine years of misdiagnoses because Claude connected the dots. A soldier in Ukraine using AI to stay mentally afloat during shelling. A lawyer in India who spent years afraid of Shakespeare and math, and recently read fifteen pages of Hamlet and started learning trigonometry again. A butcher in Chile with no tech background who built a business with Claude and said he now sees no limits.

On the fear side, the top concerns were unreliability, job loss, and losing human autonomy. But the most honest finding was this: the same people who love what AI does for them are often the ones most afraid of what it's doing to them. The person relying on AI for emotional support is three times more likely to also fear becoming dependent on it. The student getting better grades is also the one noticing they're thinking less.

The tension isn't between AI optimists and AI pessimists. It's inside every individual person using it.

One quote that stuck: "Thinking was the last frontier."

Go read the full thing at anthropic.com. It's one of the more honest pieces of writing about AI that's come out in a while.

A 50-person company just beat the big labs at coding.

Cursor dropped Composer 2 today and the headline is that it's their own model. Not Claude. Not GPT. Built in-house by a team of around 50 people.

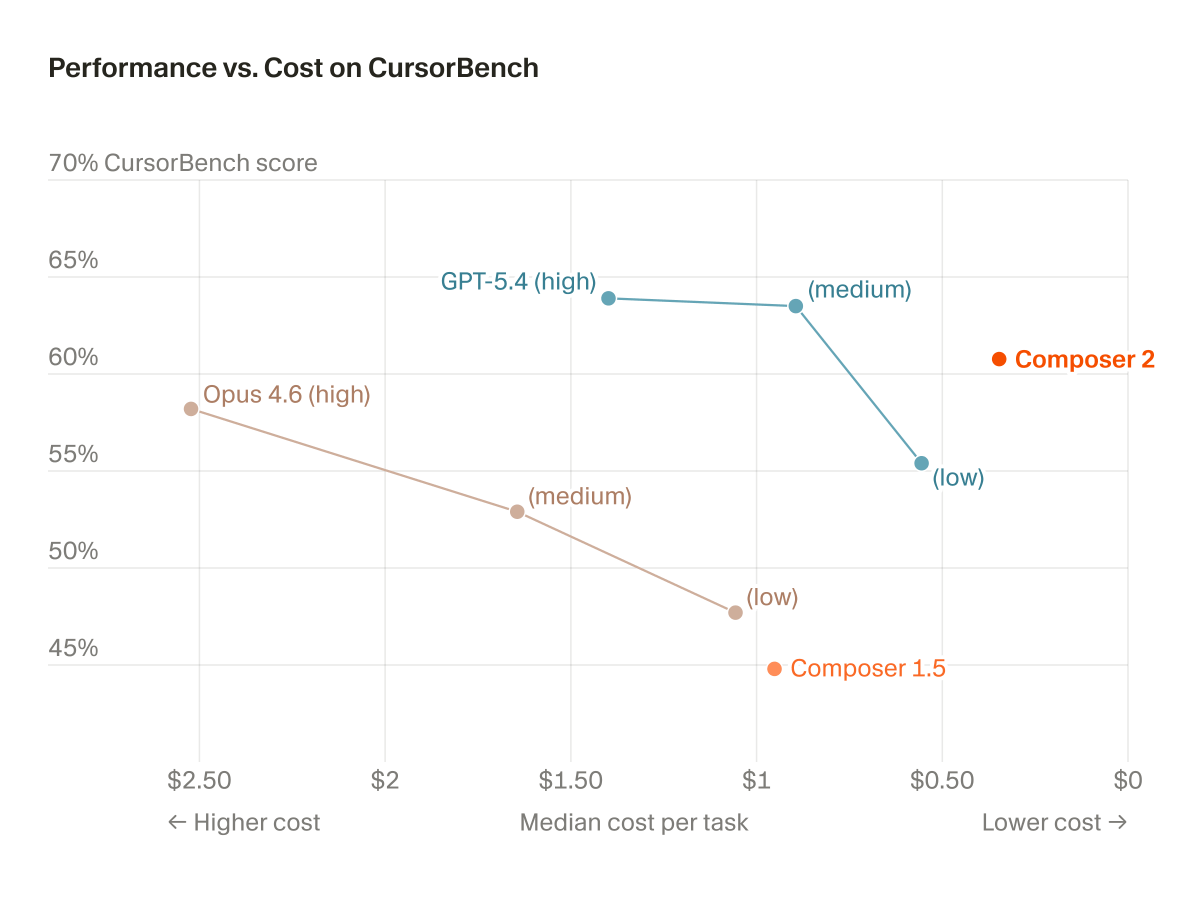

On their coding benchmark, it scores higher than Claude Opus 4.6 at less than half the cost of running the frontier models. The chart they posted tells the whole story. Composer 2 sits alone in the top right corner. High performance, low cost. Everything else is either more expensive or less capable.

It's a paid developer tool so most people reading this won't use it directly. But the signal matters: a small team just out-coded the biggest labs at their own specialty. That's not supposed to happen.

The gap between a 50-person startup and a $30 billion lab just got a lot smaller.